AI Detection, Part 1

Can an AI detect the real thing?

In reaction to the Shy Girl controversy, I decided to check if an ‘AI detector’ can, indeed, detect AI and if it can deliver false positives. Keep in mind that this is a sample—a mere spot check—yet I think it shows some edifying results.

I used the first AI detector that Kagi showed me: ZeroGPT (supposedly the top search result).

Then, I copied and pasted the first couple of paragraphs[1] of ten well-known works that are in the public domain into ZeroGPT.

Correctly identified as human (0% AI-generated):

Moby-Dick (1851) by Herman Melville;

The Grapes of Wrath (1939) by John Steinbeck;

Finnegans Wake (1939) by James Joyce;

Identified as ‘most likely human’ (“Your text is likely human written, may include parts generated by AI/GPT”):

The Great Gatsby (1925) by F. Scott Fitzgerald was analysed as 9.3% AI/GPT generated;

To Kill a Mockingbird (1960) by Harper Lee was analysed as 25% AI/GPT generated;

Jane Eyre (1847) by Charlotte Brontë was analysed as 27.2% AI/GPT generated;

The false positives:

The Adventures of Huckleberry Finn (1884) by Mark Twain was analysed as 64.8% AI/GPT generated[2];

Alice’s Adventures in Wonderland (1865) by Lewis Carroll was analysed as 89.5% AI/GPT generated[3];

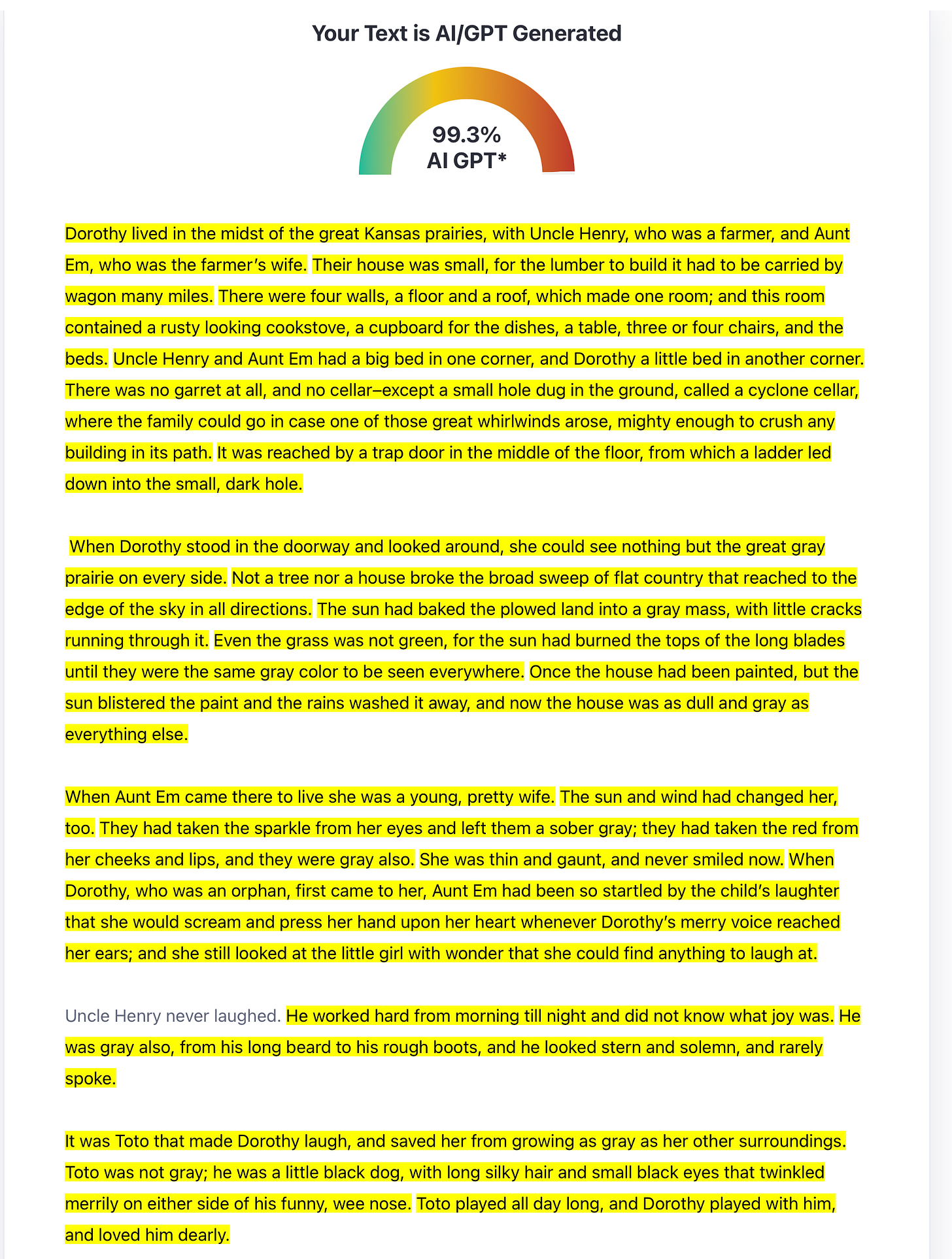

The Wonderful Wizard of Oz (1900) by L. Frank Baum was analysed as 99.3% AI/GPT generated[4] (see screenshot);

Pride and Prejudice (1813) by Jane Austen was analysed as 60.5% AI/GPT generated[5];

What I don’t understand is that such an AI detector doesn’t recognise these works as being in the public domain, meaning they can easily be found on the internet. To test this, I copied and pasted the first paragraph from the above-mentioned books in Kagi, which correctly identified their origin every time.

Nevertheless, what this small sample shows (I didn’t feel like testing one hundred or more opening paragraphs of public domain books) is that ZeroGPT simply cannot be trusted to detect whether a text was written by a human or not. It got 4 out of 10 completely wrong and 3 out of 10 partly wrong. In other words, its score was 30%, which is a fail in any exam worth its salt.
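For anyone who wants to redo the tally, here is a minimal Python sketch of the arithmetic, using only the percentages listed above. The 50% cut-off and the function names are my own assumptions for illustration. ZeroGPT produced the verdict labels itself, and I don't know which thresholds it uses internally.

```python
# Percentage flagged as AI/GPT generated, as reported in this post.
scores = {
    "Moby-Dick": 0.0,
    "The Grapes of Wrath": 0.0,
    "Finnegans Wake": 0.0,
    "The Great Gatsby": 9.3,
    "To Kill a Mockingbird": 25.0,
    "Jane Eyre": 27.2,
    "Pride and Prejudice": 60.5,
    "The Adventures of Huckleberry Finn": 64.8,
    "Alice's Adventures in Wonderland": 89.5,
    "The Wonderful Wizard of Oz": 99.3,
}

# Assumption: anything at or above 50% counts as a false positive.
# This is an illustrative cut-off, not a documented ZeroGPT setting.
FALSE_POSITIVE_CUTOFF = 50.0


def classify(score):
    """Sort one score into the three groups used in this post."""
    if score == 0.0:
        return "correct"
    if score < FALSE_POSITIVE_CUTOFF:
        return "partly wrong"
    return "false positive"


def tally(results):
    """Count how many works fall into each group."""
    counts = {"correct": 0, "partly wrong": 0, "false positive": 0}
    for score in results.values():
        counts[classify(score)] += 1
    return counts


counts = tally(scores)
accuracy = counts["correct"] / len(scores)

print(counts)
print(f"Fully correct: {accuracy:.0%}")
```

With these ten works the sketch reproduces the 3, 3 and 4 split, so only three out of ten count as fully correct.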

So can experienced human editors achieve a better score? I would surely hope so, yet there is a whole world of difference between bad prose and AI-generated prose. Obviously, these may overlap. So I put the first couple of paragraphs of Fifty Shades of Grey into ZeroGPT: 20.5% AI/GPT generated. Note that I copied these paragraphs from the Vintage Books edition of April 2012, which implies two things:

LLMs did not exist at the time, so E. L. James wrote this herself;

One assumes and hopes that an in-house editor (or a hired one) has gone over the text;

And still ZeroGPT thinks 20.5% is AI/GPT generated. I’ll go out on a limb and say that even the best human editors will not be able to say with 100% certainty whether a text is AI-generated. It may be purple prose, it may be bad or just plain mediocre writing (which can still be a bestseller: see E. L. James and Dan Brown[6]), or it may indeed be AI-generated (more than one can be true).

But proving that a text is AI-generated in a court of law will be, I suspect, impossible. The complainant may have used an AI detector tool (which they would then need to disclose), and it will be trivially easy for the defence to produce false positives using books written before LLMs existed. And trying to convince a jury (or a judge in EU courts) through expertise is a lost cause. Just try to prove that a piece of bad writing is AI-generated and not just purple prose: good luck with that.

Therefore, I think we should be very careful before we accuse anybody of using AI-generated text (I’m talking fiction here, as non-fiction is a whole different kettle of fish, and equally difficult to (dis)prove). This means the only things an acquiring editor can do are either:

Let the author state in writing that they did not use AI when writing their story[7];

Do not purchase the story when the suspicion won’t go away (err on the side of caution);

But I cannot state this enough: mediocre prose can still sell like hotcakes—see Fifty Shades of Grey and The Da Vinci Code. In the end it’s a matter of taste and—roughly speaking—more people eat hamburgers than they do Beef Wellington.

So I’ve established that an AI detector can throw up false positives. Can it also conjure false negatives?

More on that in AI Detection, Part 2: can a thief recognise a thief?

Author’s note: I’ve been under the weather the past week, so have been mostly offline (while scheduled posts appeared automatically). Feeling a bit better, now. Welcome to a new subscriber and many thanks for reading!

[1] Most of the reactions on the Shy Girl controversy centre on the first couple of paragraphs, so I think this is a fair tit for tat;

[2] So I guess Mark Twain was way ahead of his time;

[3] So Lewis Carroll was even further ahead of his time;

[4] L. Frank Baum must have had a time machine;

[5] Jane Austen must have used a prototype of Charles Babbage’s Difference Engine;

[6] Alright, according to ZeroGPT the prologue of “The Da Vinci Code” (2003) is 61.1% AI/GPT generated;

[7] This is already fairly standard practice in submission platforms like CW, Moksha and others;