The Replicant, the Mole & the Impostor, Part 6

Part 2—the conclusion—of a duology where a reality event held in a refugee camp on a Greek island unfolds in an utterly unexpected manner. There will be 50 parts. Chapter 6: January.

—At the Lab—

The researchers at their monthly meeting cannot escape the fast-moving developments in the refugee camp. Some, indeed, have purchased AR-glasses in order to get a feeling of how things work in that realm.

“Isn’t it getting more interesting by the week, or even day?” Manfred Kafka says, showing his AR-gear. “Just in order to keep up with our candidates, we need to follow them in AR-space as well.”

“Can’t we create an app for that,” Jennie Richards says, “that can then filter out the replicant?”

“Use a thief to catch a thief?” Manfred Kafka says. “I like your thinking.”

“But if we use a machine to catch a machine,” Themba Letesha says, “aren’t we making ourselves obsolete?”

“You and your common sense,” Manfred Kafka says, laughing, “destroying fun projects since, well, August.”

“Well, there are many possible layers to this,” Rachel Mónica Diaz says. “Like wouldn’t an app smart enough to filter out the replicant be almost as smart as the replicant? And why would that app betray the replicant? Wouldn’t it be more in its advantage to keep it a secret, so that the replicant and—by extension—machine intelligence in general win this contest?”

“Machines, eh?” Léa Truchon says. “You can’t trust them. And I thought I was the conspiracy theory aficionado.”

“I think that figuring out how neural networks actually work—and possibly think, if they perform such a task—needs to come first,” Akira Kobayashi says, “before we can ascribe something like agency to them.”

“Isn’t ‘agency’ what we program into them?” Jennie Richards says. “In that regard, I’ve given some thought to the three ‘Principles of Russell’ developed by Stuart Russell. Like Asimov’s ‘Three Laws of Robotics’, they beg you to look for loopholes.

“Here’s a quick reminder:

The machine’s only objective is to maximize the realization of human preferences;

The machine is initially uncertain about what these preferences are;

The ultimate source of information about human preferences is human behavior.”

“I suspect both sets are meant to give everybody food for thought, not to serve as actual working principles,” Rachel Mónica Diaz says. “And only to be implemented once all the kinks have been worked out.”

“I wouldn’t be so sure about Russell’s proposed principles,” Akira Kobayashi says, “as they are already being applied.”

“Uh-oh,” Jennie Richards says. “What could possibly go wrong?”

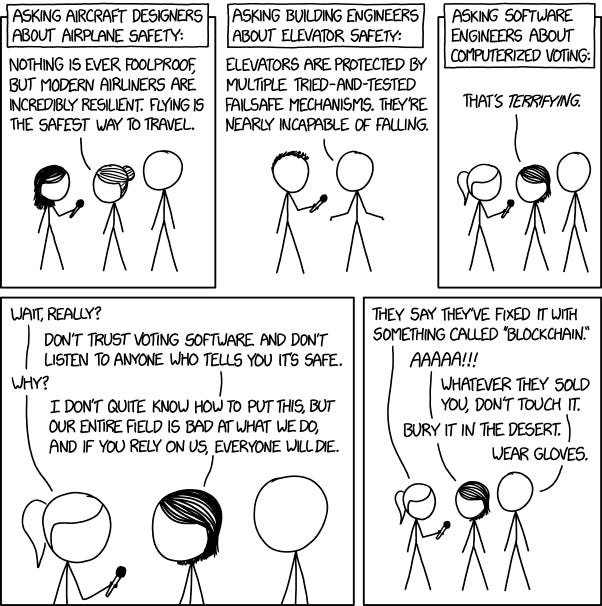

“Unfortunately, the XKCD comic about the safety of airplanes, elevators, and voting machines is all too close to reality,” Akira Kobayashi says. “As software programmers do not test their own code thoroughly, and software testers are laid off to save money, the public is basically becoming the beta testers.”

“In that case, my addendum to them has only become more urgent,” Jennie Richards says, “since I haven’t seen ‘human preferences’ very well defined. To be blunt: some people would gladly prefer to let others—even machines—make all the decisions for them, as long as they’re fed and can watch TV. Some people prefer that other people suffer, especially if these others suffer for their benefit. I could go on.”

“Well, principle number two gives our AI leeway to determine what actual human preferences are,” Rachel Mónica Diaz says, “and refine it along the way.”

“Which can be a recipe for disaster,” Jennie Richards says. “Imagine our AI trying to maximize the preferences of an online, right-wing hate group: it might become just as bad as them, or worse. Remember Microsoft’s AI chatbot Tay? Offhand, I can think of several more similar scenarios.”

“So what do you propose to do about it,” Themba Letesha says, “except test thoroughly?”

“Provide a very solid baseline definition for human preferences,” Jennie Richards says. “One that doesn’t give idiots on Twitter, Instagram, TikTok and Facebook one nanometer, let alone an inch, of leeway.”

“Don’t let the unwashed masses get wind of your proposal,” Manfred Kafka says, “or they’ll scream blue murder that the experts are deciding all the important matters for them.”

“The same masses that elected climate change deniers as prime minister in my home country, several times in a row?” Jennie Richards says. “After which half the country got singed by the worst bushfires ever, followed by floods of—oh, well—biblical proportions.”

“The same hypocrites that complain about experts,” Léa Truchon says, “but would think twice before they’d let—say—a car mechanic perform open heart surgery on them?”

“Closer to home,” Rachel Mónica Diaz says, “where people from a conservative religion quickly judge an outspoken, extravert western lesbian, and then—once they experience how good she is—gladly use her services in AR-space?”

“Well, at least many of these refugees are willing to change their minds,” Jennie Richards says. “I’m not so sure about a lot of others, even as they witness first-hand what the experts warned them about.”

“Nevertheless, what should the baseline for human preferences be?” Manfred Kafka asks. “Not being filthy stinking rich, I suppose.”

“Why not?” Themba Letesha says. “Then the robots will work—literally tirelessly—to create a post-scarcity society, where everybody gets a billion dollars, as money becomes useless, anyway, at that point?”

“Which would prove the adage ‘money doesn’t buy you happiness’ pretty stat,” Manfred Kafka says, “as most people won’t know what to do with themselves—this includes a considerable segment of current billionaires, BTW. They’d be bored to death, get addicted, or commit suicide.”

“On the one hand, it would greatly thin the herd and relieve the strain humans put on this planet’s ecosystems,” Rachel Mónica Diaz says, “but on the other hand, we’re ethical scientists, not catalysts to genocide. So what do you have in mind, Jennie?”

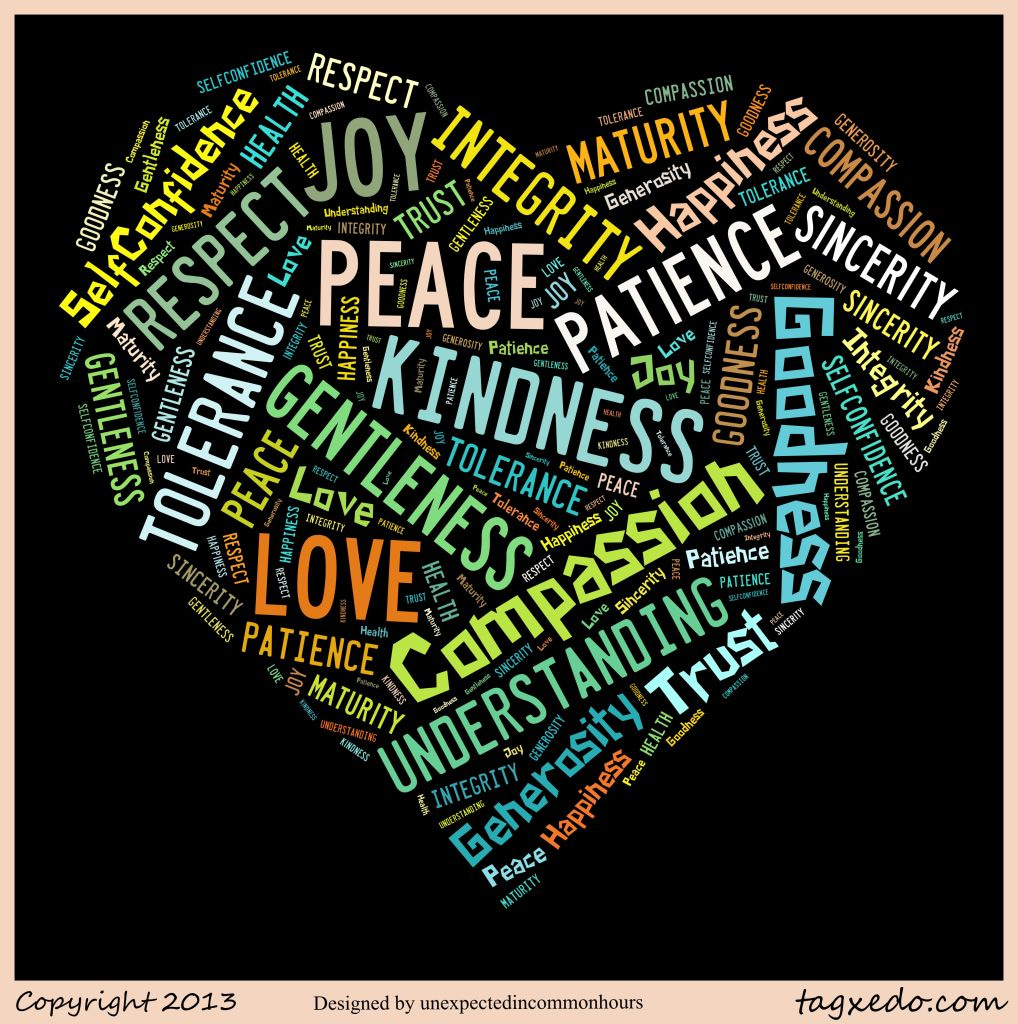

“It’s just a framework,” Jennie Richards says, “meant to give our AI a basic reference. Without that, it can basically become anything—as already demonstrated—and this is meant to guide it in the right direction. A starting point, definitely not the final word, and I welcome your comments. Please see my thoughts in the doc in our shared cloud.

“The most basic rule is the easiest to come up with, but the hardest to define: Happiness of the many above the happiness of the few. Which leaves us to find a foolproof definition of ‘happiness.’ Tough one.

“The other rules are based on ten basic human values—in order of importance: love, kindness, honesty, openness, peace, justice, equality, loyalty, detachment (in the sense of willingness to let go of matters), and respect. Roughly speaking, I’ve given them the following hierarchy:

1. love above kindness;

2. honesty above openness;

3. peace above justice;

4. equality above loyalty;

5. detachment above respect;

“In case there is no clear preference, then there should be no action from the AI (no choice better than a wrong choice; also a ‘freezing’ AI tells a human that they’ve not made their choices clear);

“Of all these human values, giving them goes above receiving them, hence:

1. love given above love received;

2. kindness given above kindness received;

3. loyalty given above loyalty received;

4. peace given above peace received;

5. respect given above respect received;

6. honesty given above honesty received;

7. openness given above openness received;

8. equality given above equality received;

9. detachment given above detachment received;

“With the single exception of justice: justice received above justice dealt.

“Finally, I thought about including a rule like long-term improvements above short-term improvements, but decided against it because A, again, the definition problem, but mostly B, if we set up the above right, then long-term acting should follow eventually. Again, I welcome your comments.”

“Some very interesting ideas, Jennie, and I think we should produce a joint paper on them,” Manfred Kafka says, “as I agree it may be of vital importance. However—sorry to play devil’s advocate—what does this have to do with our quest to unmask the replicant?”

“For one, it teaches us to think like the other,” Jennie Richards says. “If we have a decent understanding of our replicant’s programming, we should be able to see that in its behavior. I would certainly welcome Akira’s input on that. For another, if the candidates of this reality event, which is getting out of hand, can deviate from their prime directives, surely we are allowed a few instructive distractions of our own?”

“Definitely,” Rachel Mónica Diaz says. “Some of my own ‘instructive distractions’ have eventually led to some of my best work.”

“Which—for some reason—gets me to wonder about the agency of our replicant,” Léa Truchon says. “Would a replicant initiate—or even lead—a revolution like this?”

“I suspect not,” Manfred Kafka says, “as its programming would be basically reactive. It might be an early adopter, but not an instigator.”

“Just look at Katja, she went on fire once the true injustice of the camp’s situation became fully clear to her,” Rachel Mónica Diaz says, “and she’s human as can be.”

“Which would put Agnetha firmly on the human side,” Themba Letesha says, “as she’s highly proactive as well.”

“Agreed,” Manfred Kafka says, “but couldn’t that lead to a few false positives? I’m thinking about Rahman and Akama, for instance.”

“Good point,” Jennie Richards says, “as most humans aren’t innovators, either. Therefore, a truly smart AI—lacking the agency to start something truly new, yet having literally all the databases in the world available to tell it when that happens—would be a lightning quick early adopter, right?”

“Like Dewi,” Léa Truchon says, “who immediately started to set up solid—and quite elaborate—plans to forward Katja’s ideas.”

“Which might be because of her academic background,” Manfred Kafka says. “Also like the way Omar will always be there for his African friends, despite them being way ahead of him.”

“Which is to be expected from a man from Paris’s banlieues,” Léa Truchon says, “who has African ancestry.”

“So you two are implying that Omar and Dewi are the most likely candidates for the replicant?” Themba Letesha says, cutting to the heart of the matter.

“Basically, yes,” Manfred Kafka admits.

“We should put them on the top of our list, every time,” Léa Truchon says, “that way we’ll find out soonest.”

“Not Agnetha, whose AR-performance is surely superhuman?” Themba Letesha—the common-sense person of the group—says.

“Some people just have those abilities,” Manfred Kafka says, “like the chess grandmasters (and their checkers counterparts) of yore, who could play against more than ten other players at once. It’s not impossible.”

“Not Rahman,” Themba Letesha says, “who often acts like a fish out of water?”

“Well, that basically is Rahman,” Manfred Kafka says, “as I strongly suspect he left his native Bangladesh out of poverty, yet misses it every day of his life. I’m certain that if he could make the same money in Dhaka, he’d be back without a second thought. If he is the replicant, I will eat my lederhosen.”

“Not Akama,” Themba Letesha says, “who always seems to keep a certain distance?”

“I’m willing to bet good money that Akama’s got a skeleton in his closet,” Léa Truchon says, “nothing serious, mind you—otherwise the show’s producers have not done their pre-checks very well—but something he is quite upset about.”

“And what about Olga?” Themba Letesha finishes the list.

“A dark horse,” Léa Truchon says, “although I suspect her bait-and-switch game between aloofness and empathy is a survival trait from her journalist days in Russia.”

—At the Residence—

After last month’s New Year’s extravaganza, the residence has reverted to its normal, more reserved self. January has been chilly, muddy, and wet. Yet it went by pretty fast, as everybody has been so busy. Time flies if you’re having fun? Well, while everybody surely has been busy, not all of them have had fun. Unless you define work as fun, and you’re called Piotr. Or Kristel, who’s been beaming ever since she introduced pets in the camp. But the rest have—after their initial hangover faded—been working themselves into exhaustion like maniacs. Maniacs with a cause, even if the direction they’re going in is unclear.

No formal dress tonight, as everybody’s just wearing what they feel comfortable in. Hanneke van Geraardsbergen and Michael Thuisloos also wear jeans and T-shirts, as if to show that things have reverted to normal—that is, whatever version of normal is happening this month.

“Here we are again,” Hanneke van Geraardsbergen says, “in the sixth month of this show.”

“The last time we ask the audience and the team of specialists to select a human,” Michael Thuisloos says, “as the number of viewers, followers, and fans has reached three point eight billion, according to our best measurements.”

“Will we break the magic number of four billion?” Hanneke van Geraardsbergen says. “Stay tuned until next month, as I’m sure even more incredible things will happen.”

“But first,” Michael Thuisloos says, “the votes. After this, we will start voting for the replicant, and this show may very well end this February. Or run all the way into May. So let’s see what the people think:
